P-values and statistical significance: New ideas for interpreting scientific results

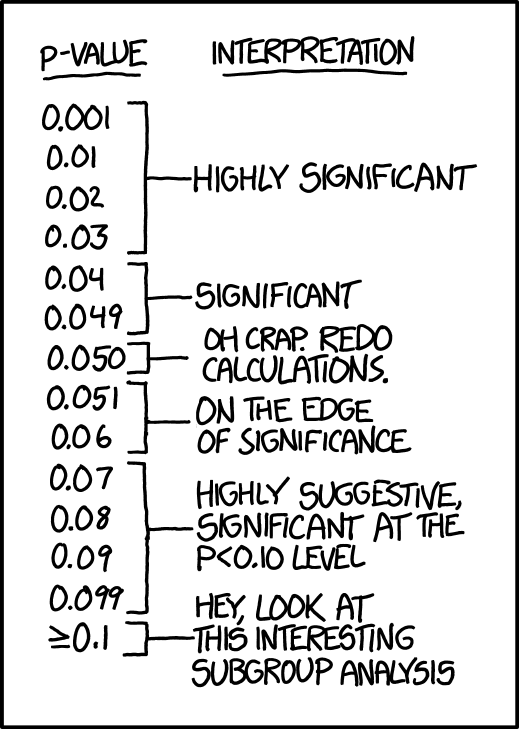

Comic from XKCD.

Experts are looking for more meaningful alternatives to statistical measures such as the often misleading and sometimes abused “p-value.”

When statistician Nicole Lazar published an editorial in The American Statistician earlier this year advocating changes in the way scientists handle the troublesome issue of statistical significance, her father—who trained as a sociologist—asked her, “Are you getting death threats on Twitter?”

Lazar, a professor of statistics at the University of Georgia, doesn’t use Twitter, but the question reveals how contentious the issue of statistical significance is. “You don’t often think about statisticians getting emotional about things,” Lazar told an audience of writers attending the Science Writers 2019 conference held in State College, Pa., “but this is a topic that’s been raising a lot of passion and discussion in our field.” Lazar spoke on Oct. 27 as part of the New Horizons in Science briefings organized by the Council for the Advancement of Science Writing (CASW).

Many scientists determine whether the results of their experiments are “statistically significant” by using statistical tests that result in a number known as the “p-value.” A p-value of less than 0.05 is commonly considered significant, and often erroneously characterized as meaning the findings are not likely to be the result of chance. What the number actually reveals is less straightforward, and even scientists have trouble explaining the precise meaning of the p-value. Using the threshold of p < 0.05 has been shown to be problematic, misleading, and even dangerous. Lazar’s editorial, “Moving to a World Beyond ‘p < 0.05’,” discusses several possibilities that will give researchers alternatives to an arbitrary p-value cut-off.
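For readers who want to see what the number measures, here is a minimal sketch in Python. A p-value is the probability, assuming there is no real effect, of seeing a result at least as extreme as the one observed. The sketch estimates it with a permutation test: it shuffles the group labels many times and counts how often chance alone produces a difference as large as the real one. The function name `permutation_p_value` and the two small data sets are made up for illustration and do not come from Lazar’s talk or editorial.

```python
import random


def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Estimate a two-sided p-value for a difference in group means.

    Hypothetical helper for illustration: it asks how often a random
    relabeling of the data gives a difference at least as large as the
    one actually observed.
    """
    rng = random.Random(seed)
    n_a = len(group_a)
    observed = abs(sum(group_a) / n_a - sum(group_b) / len(group_b))

    pooled = list(group_a) + list(group_b)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # pretend the group labels carry no information
        shuffled_a, shuffled_b = pooled[:n_a], pooled[n_a:]
        diff = abs(sum(shuffled_a) / n_a - sum(shuffled_b) / len(shuffled_b))
        if diff >= observed:
            at_least_as_extreme += 1

    # Count the observed arrangement itself so the estimate is never zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


# Made-up measurements for two groups (illustrative only).
treated = [10, 11, 12, 13, 14, 15]
control = [1, 2, 3, 4, 5, 6]
print(permutation_p_value(treated, control))
```

A small result here says only that such a large difference would be rare if the labels were meaningless. It does not give the probability that the finding is true, which is the misreading described above.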

Saying more about research results

Lazar’s editorial led a special issue of The American Statistician journal that includes 43 online open-access papers with ideas on how to move past this problem. In general, statisticians are not advocating getting rid of p-values altogether. Instead, their suggestions point to a practice of reporting more results and statistical information. “And not just what is significant and catchy,” says Lazar. With a higher level of transparency, she argues, more meaningful interpretations are possible.

Findings based on one simple metric are problematic, Lazar explained. They’re the reason for countless papers being retracted from scientific journals. When studies are replicated, significant results tend to disappear. In 2005, Stanford epidemiologist John Ioannidis made waves with an essay titled “Why most published research findings are false,” outlining many of the pitfalls of using, and abusing, p-values. Abusive practices include “p-hacking,” where scientists manipulate the data, testing different combinations of variables until they find a p-value less than 0.05. Ioannidis also argued that focusing on the p-value while ignoring the size of the study and the size of the effect shown is especially problematic.
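The effect of p-hacking can be illustrated with a short simulation. The sketch below assumes a simplified setup that is not taken from Ioannidis’s essay: every study compares two groups drawn from the same distribution, so no real effect exists, and each outcome is checked with a basic z-test that assumes a known variance of 1. The function names and parameters are hypothetical. A researcher who tests one outcome should see p < 0.05 about 5 percent of the time; one who tests 20 outcomes and reports the best one should see it far more often, since 1 − 0.95^20 is roughly 0.64.

```python
import math
import random
from statistics import NormalDist


def two_sided_p(group_a, group_b):
    """Two-sided z-test p-value, assuming equal group sizes and variance 1."""
    n = len(group_a)
    mean_a = sum(group_a) / n
    mean_b = sum(group_b) / n
    z = (mean_a - mean_b) / math.sqrt(2 / n)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(n_outcomes, n_studies=1000, n_per_group=20, seed=0):
    """Share of no-effect studies where at least one outcome has p < 0.05."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        p_values = []
        for _ in range(n_outcomes):
            # Both groups come from the same distribution: no true effect.
            a = [rng.gauss(0, 1) for _ in range(n_per_group)]
            b = [rng.gauss(0, 1) for _ in range(n_per_group)]
            p_values.append(two_sided_p(a, b))
        if min(p_values) < 0.05:  # report only the "best" outcome
            hits += 1
    return hits / n_studies


print("1 outcome tested:  ", false_positive_rate(1))
print("20 outcomes tested:", false_positive_rate(20))
```

Nothing in the simulated data changes between the two runs. Only the number of comparisons grows, which is why reporting every test that was run, and not just the significant one, matters.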

To counter this malpractice, in 2016 the American Statistical Association formed a committee of experts to develop possible solutions. Subsequently, a 2017 symposium on statistical inference with scientists from various disciplines discussed possible alternatives. The many suggestions included adopting a lower p-value of 0.005 as the threshold for significance, or analyzing the effects on a spectrum instead of using arbitrary categories. “We don’t think there should be a one-size-fits-all solution,” Lazar says. “We think that different sciences, different disciplines are going to come to different solutions, and that’s okay.”

Keeping her science-writing audience in mind, Lazar made suggestions for how science journalists can better navigate the issue as well. In a culture where science news is often dominated by reports on a single scientific study with flashy findings, science journalists need to be skeptical about unexpected or counterintuitive results. Lazar cautioned that one paper with “statistically significant” results may not be reliable, and science writers should look for a convergence of results from multiple studies.

Can p-values be hazardous to your health?

Lazar shared an example of how relying too heavily on p-values can be deadly. Earlier this year, biologist Janet Hapgood of the University of Cape Town consulted with Lazar about findings on the relative risk of HIV infection among three different contraceptive methods in the ECHO Trial, a large epidemiological study involving 7,829 women.

Hapgood was concerned because the reported results had p-values slightly above 0.05, and so the researchers of the ECHO Trial had concluded that differences among methods were not significant. “They reached that conclusion because they are relying on the 0.05 threshold to do all their decision-making for them,” Lazar said.

As a result of the increased scrutiny of p-values, publication practices are already changing, with some journals requiring authors to avoid the “statistical significance” language. Besides journals, Lazar believes funding agencies need to encourage more in-depth analysis and reporting of results by requiring researchers to pre-register details of the statistical tests they plan to do for a given study.

Lazar is optimistic about what the future holds. “We’re in a good position for change, and I’m really happy to be talking to science writers because it’s all of us together, collectively, that are going to get us to this post-p < 0.05 world.”